Introduction

Going back to the early 2000s, one finds many businesses running on “web hosting” or serving customers directly from bare-metal servers. After all, the “cloud” only became a thing and gained traction in the last 5 to 10 years. Many such businesses have survived to the present day.

What was (and in many scenarios still is) the reality of providing services from a colocated or on-premises bare-metal server?

- The physical server had to be purchased before anything else, and there were often requirements on the enclosure size that ruled out consumer-grade hardware;

- The operating system had to be installed by hand, from installation media;

- Configuration was a tedious process, with many details still being fixed in the days after going live.

This text is about backing up data that already resides in AWS, not data kept outside it, although some of the methods apply to both cases. But let’s start from the beginning:

What Data?

Your data can live on EC2 nodes (virtual servers), or you may be using a dedicated database service such as RDS. Dedicated services already have backup functionality built in, with settings easily accessible through the AWS Management Console. I won’t deal with those here, but rather with the “raw” data you may have on a node.

The data on a node falls into two categories, or rather can be viewed from two different perspectives:

- Capturing the “system state” at a certain point in time. This perspective does not consider what the data is made of, but the functionality being captured, which can be restored later as a known-good fallback point.

- Capturing the state of a specific subsystem (e.g. a subset of the local storage, or a subset of a local database). This is the “classical backup” as it is widely known.

Capturing State

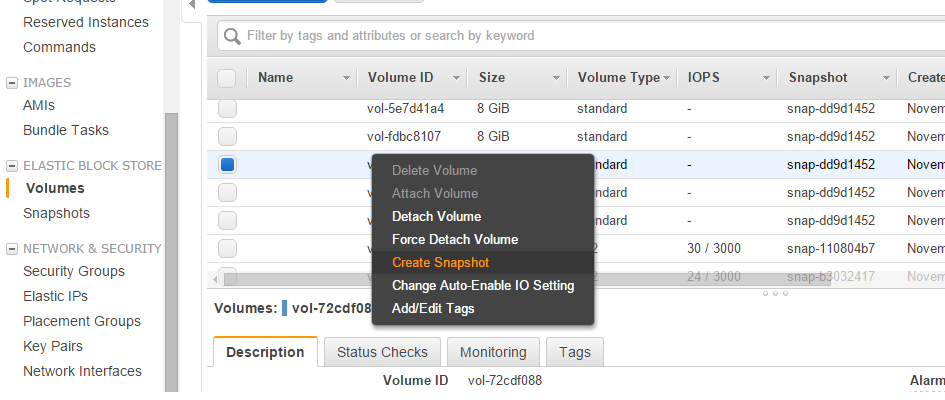

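AWS offers full support for taking snapshots of EBS volumes (the block storage attached to EC2 nodes).

The console is not the only option: all of this functionality is also available programmatically, through the AWS CLI, the SDKs and the EC2 API. Python users may want to look at the Boto library (its current version is Boto3).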
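As an illustration of the programmatic route, the snapshot workflow can be scripted with Boto3 roughly as follows. This is a minimal sketch, not a complete backup tool: it assumes `ec2` is a Boto3 EC2 client (e.g. `boto3.client("ec2")`) with permission to create, describe and delete snapshots. `create_snapshot`, `describe_snapshots` and `delete_snapshot` are real EC2 client methods; the wrapper functions, the `backup-job`/`nightly` tag and the age-based retention rule are hypothetical choices made for the example.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical tag that marks snapshots created by this script.
BACKUP_TAG_KEY = "backup-job"
BACKUP_TAG_VALUE = "nightly"


def snapshot_volume(ec2, volume_id, description):
    """Start an EBS snapshot of `volume_id` and return the new snapshot ID."""
    response = ec2.create_snapshot(
        VolumeId=volume_id,
        Description=description,
        TagSpecifications=[{
            "ResourceType": "snapshot",
            "Tags": [{"Key": BACKUP_TAG_KEY, "Value": BACKUP_TAG_VALUE}],
        }],
    )
    # The snapshot completes asynchronously; it starts in the "pending" state.
    return response["SnapshotId"]


def prune_snapshots(ec2, volume_id, keep_days, now=None):
    """Delete this script's snapshots of `volume_id` older than `keep_days` days.

    Pagination (NextToken) is ignored for brevity. Returns the deleted IDs.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=keep_days)
    response = ec2.describe_snapshots(
        OwnerIds=["self"],
        Filters=[
            {"Name": "volume-id", "Values": [volume_id]},
            # Only consider snapshots carrying our hypothetical tag.
            {"Name": f"tag:{BACKUP_TAG_KEY}", "Values": [BACKUP_TAG_VALUE]},
        ],
    )
    deleted = []
    for snap in response["Snapshots"]:
        # Boto3 returns StartTime as a timezone-aware datetime.
        if snap["StartTime"] < cutoff:
            ec2.delete_snapshot(SnapshotId=snap["SnapshotId"])
            deleted.append(snap["SnapshotId"])
    return deleted
```

The client is passed in rather than created inside the functions, which keeps the sketch testable without AWS credentials. Filtering on the tag, and not only on the volume, keeps the pruning step from deleting snapshots that someone took by hand.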

Classical Backup

Files can be stored programmatically (anything from cron-based scripts to full-fledged backup software running on a schedule) in the following AWS services:

- Simple Storage Service (S3): the easiest to use, as it offers immediate storage and retrieval as well as versioning (e.g. you may mirror the contents of a directory to S3 at various points in time and keep every version). It is not a cost-effective option for storing huge amounts of data (multiple terabytes) over long periods of time.

- Glacier: the equivalent of tape storage. Retrieval is not instant (a retrieval must be requested in advance and can take hours to complete). It does not support versioning by default. It is, however, 3-4 times cheaper than S3.

- A dedicated EC2 node (or several nodes organized as a backup storage cluster): not cost-effective, but it may work in certain scenarios (e.g. live data mirroring).

- A dedicated database in RDS: far from cost-effective, but it is the way to go if you want to use existing backup software that can only store data in a database.
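A cron-driven script for the S3 option might look like the following minimal sketch. It assumes `s3` is a Boto3 S3 client (e.g. `boto3.client("s3")`), that the bucket already exists, and that bucket versioning provides the point-in-time history: each run uploads every file to the same key, so S3 keeps the older copies as previous versions. `put_bucket_versioning` and `upload_file` are real S3 client methods; the function names, the key layout under `prefix` and the optional `storage_class` argument are illustrative. Passing `"GLACIER"` stores the objects in S3’s Glacier storage class, which is not the same thing as a standalone Glacier vault.

```python
import os


def enable_versioning(s3, bucket):
    """Turn on versioning so overwritten keys keep their previous contents."""
    s3.put_bucket_versioning(
        Bucket=bucket,
        VersioningConfiguration={"Status": "Enabled"},
    )


def mirror_directory(s3, local_dir, bucket, prefix, storage_class=None):
    """Upload every file under `local_dir` to s3://bucket/prefix/<relative path>.

    Returns the list of uploaded object keys.
    """
    extra_args = {"StorageClass": storage_class} if storage_class else None
    uploaded = []
    for root, dirs, files in os.walk(local_dir):
        dirs.sort()  # walk subdirectories in a stable order
        for name in sorted(files):
            path = os.path.join(root, name)
            # S3 keys always use "/" regardless of the local path separator.
            rel = os.path.relpath(path, local_dir).replace(os.sep, "/")
            key = f"{prefix}/{rel}"
            s3.upload_file(path, bucket, key, ExtraArgs=extra_args)
            uploaded.append(key)
    return uploaded
```

Because every file is re-uploaded on every run, unchanged files also gain new versions (and storage costs); a real script would compare modification times or checksums first, or delegate the work to `aws s3 sync`.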

That was my introduction to doing backups in AWS. Thanks for reading!