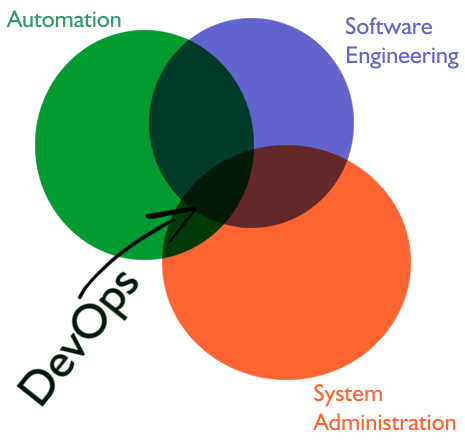

DevOps: some people think it’s hype, or just the sysadmin job redefined; others believe it’s just another skill you pick up from some training or at school. It’s neither. Personally, I would say it’s a combination of skills: you get some from school, others from formal training, and the rest from daily hands-on work.

Why is it not just some SysAdmin job redefined?

- A “classical” SysAdmin does not use complex automation tools. His/her usual job is to go from one node to the other and set configurations, install software and fix things that do not work;

- A normal SysAdmin job does not (usually) involve coding, apart from small scripts that run on individual nodes;

- The “classical” SysAdmin (usually) deals with physical nodes and a good part of his/her daily job is fixing storage issues (rebuilding file systems, restoring from backups), debugging various hardware incompatibilities and applying patches.

Why do (serious, formal) coding skills matter for a DevOps?

The current industry trend is to express infrastructure through Code. You can currently spawn entire environments (e.g. in AWS), with properly sized hardware configurations and services installed, through Code only; this is possible with Automation tools like Chef. This takes more than simple scripting skills, although no complex algorithms are involved either.
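To make "infrastructure through Code" a bit more concrete, here is a minimal sketch in plain Ruby (not Chef itself). It assumes the desired state of a node is just a list of packages; the package names and the `plan` helper are made up for illustration. The point is that you declare what the node should look like and let code work out what is missing.

```ruby
# Desired state of a node, declared as data (hypothetical package names).
DESIRED_PACKAGES = %w[nginx git ntp].freeze

# Returns the actions needed to bring a node from its current state to
# the desired one. Running it again on a converged node yields nothing
# to do, which is what makes repeated runs safe.
def plan(installed, desired = DESIRED_PACKAGES)
  (desired - installed).map { |pkg| "install #{pkg}" }
end

puts plan(%w[git])                 # actions for a node that only has git
puts plan(DESIRED_PACKAGES).empty? # a converged node needs no actions
```

Tools like Chef apply the same idea at a much larger scale, with many resource types besides packages.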

As I have just mentioned, DevOps is defined through Automation, as node interaction is no longer almost entirely manual. One now runs commands on multiple nodes at once and employs various automated monitoring tools that raise alarms and (sometimes) trigger tasks that fix those issues, including, but not limited to, scaling resources or even adding new nodes to a cluster.

Who needs DevOps skills? I think any organization that runs a computing environment large enough that scaling through classical SysAdmin operations is no longer feasible or cost-effective. Or, for that matter, any organization that intends to move its infrastructure to the Cloud. Since this is a new position that cannot be put in the same category as Devs, SysAdmins or QAs, there may be difficulties in defining the job requirements, the career path or even the compensation, but things are starting to change.

This was my introduction to the DevOps concept. Thank you for reading!

If you know what Chef is, you may skip this one or actually stay for a (hopefully) interesting read. Either way, Chef is a tool that one may use for automating node configuration – e.g. installed software and its configuration files, system configuration files, NFS mounts and so on.

When interacting with Chef, one soon realizes that there are three main components involved:

- The Chef Server, which is actually just a big data repository.

- The Chef Client, which is installed and runs on endpoints and does all the “dirty work” such as changing files and installing packages. The client authenticates itself to the server by a public/private key mechanism.

- The Knife: this is the tool used by the sysadmins to actually do work with Chef.

What is stored in the repository known as Chef Server?

- Cookbooks;

- Data Bags;

- Environment and Node data.

A Cookbook is a small project written in Ruby. This project contains files for:

- Recipes – these are individual files that contain rules to be applied on clients (e.g. install an RPM package, deploy a config file from a template). The file content is plain Ruby code using constructs (calls to libraries) provided by Chef.

- Attributes – or, better phrased, default attributes (e.g. port numbers, sizes, paths etc).

- Templates – templatized configuration files in which attributes are replaced with template variables; these variables are initialized in the recipe from default or user-provided values.
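The interplay between attributes and templates can be sketched in plain Ruby. Chef templates use ERB, and variables handed to a template are read as `@name` inside it; everything else below (the attribute names, the config format, the `TemplateContext` class and `render_config` helper) is a made-up stand-in for what a recipe does for you, so treat it as an illustration rather than Chef's actual implementation.

```ruby
require 'erb'

# Default attributes, as a cookbook's attributes file might define them
# (hypothetical names and values).
DEFAULT_ATTRIBUTES = { 'port' => 8080, 'doc_root' => '/var/www/app' }.freeze

# A templatized configuration file written in ERB.
CONFIG_TEMPLATE = <<~TEMPLATE
  listen <%= @port %>;
  root <%= @doc_root %>;
TEMPLATE

# Exposes a hash of values as instance variables to the template.
class TemplateContext
  def initialize(vars)
    vars.each { |name, value| instance_variable_set("@#{name}", value) }
  end

  def render(template)
    ERB.new(template).result(binding)
  end
end

# Renders the config, letting user-provided values win over the defaults.
def render_config(overrides = {})
  TemplateContext.new(DEFAULT_ATTRIBUTES.merge(overrides)).render(CONFIG_TEMPLATE)
end

puts render_config                  # rendered with default attributes only
puts render_config('port' => 9090)  # port overridden by the user
```

In a real cookbook the template would live in its own `.erb` file and the recipe would only name it and pass the variables.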

These small projects are versioned and are usually also maintained – for development purposes – in an external repository (most likely git based). There is a system of includes and dependencies; starting the installation of one recipe may trigger the installation of a full environment.

The “data bag” is an interesting concept: these are pieces of JSON data stored inside the Chef Server. They can be encrypted, making them suitable for sensitive data such as passwords or private keys.
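For a feel of what such a data piece looks like, here is a small sketch. A data bag item is a JSON object that must carry an `id` key; the other keys and values, and the `load_item` helper, are invented for this example. In an encrypted item the values would hold ciphertext instead of readable text.

```ruby
require 'json'

# A hypothetical data bag item; only the "id" key is mandatory.
DB_CREDENTIALS_ITEM = <<~ITEM
  {
    "id": "db_credentials",
    "username": "app",
    "password": "change-me"
  }
ITEM

# Parses a raw item and rejects it when the mandatory "id" is missing.
def load_item(raw)
  item = JSON.parse(raw)
  raise ArgumentError, 'data bag item needs an "id"' unless item.key?('id')

  item
end

item = load_item(DB_CREDENTIALS_ITEM)
puts item['username']
```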

What is a (Chef) Environment? One may see it as a way of grouping nodes (servers). This allows for having shared, common data for all the nodes within a certain environment and also for running commands on groups of nodes identified by the environment they belong to. The (Chef) Node also has a data record associated with it that may override the settings inherited from the environment. This data record (also JSON-encoded) contains overrides for the default attributes described before and, on individual nodes, the list of recipes to be applied (also called the “run list”).
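The layering described above (cookbook defaults, then environment data, then node data) can be sketched as a chain of deep merges. This is a deliberate simplification: real Chef has more precedence levels than these three, and the attribute names, the `attributes` key and both helper methods are assumptions made for the example. The `recipe[...]` entries follow the usual run list notation.

```ruby
# Three layers of data, from least to most specific (hypothetical values).
COOKBOOK_DEFAULTS = { 'nginx' => { 'port' => 80, 'workers' => 2 } }.freeze
ENVIRONMENT_DATA  = { 'nginx' => { 'workers' => 8 } }.freeze
NODE_DATA = {
  'run_list'   => ['recipe[nginx]', 'recipe[app::deploy]'],
  'attributes' => { 'nginx' => { 'port' => 8080 } }
}.freeze

# Merges nested hashes; on conflict the more specific layer wins.
def deep_merge(base, overrides)
  base.merge(overrides) do |_key, old, new|
    old.is_a?(Hash) && new.is_a?(Hash) ? deep_merge(old, new) : new
  end
end

# Applies the environment on top of the defaults, then the node on top.
def effective_attributes(defaults, environment, node)
  [environment, node].reduce(defaults) { |acc, layer| deep_merge(acc, layer) }
end

p effective_attributes(COOKBOOK_DEFAULTS, ENVIRONMENT_DATA, NODE_DATA['attributes'])
p NODE_DATA['run_list']
```

Here the node keeps the worker count set by its environment but listens on its own port.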

As I have mentioned before, the effective work is performed with knife. The entire documentation is available on the Chef website.

From a DevOps perspective, the most frequent operations are:

- Modifying environment and node data;

- Uploading and downloading cookbooks without dependency checking; for dependencies there is another tool, berks (Berkshelf);

- Running the same shell command on a group of nodes (query based).
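To give an idea of what these operations look like on the command line, the sketch below only builds the knife invocations as strings; it does not run anything. The subcommands are standard knife ones, while the environment, node and cookbook names, the search query value and the `knife` helper are placeholders invented here.

```ruby
require 'shellwords'

# Builds a knife command line as one safely quoted string.
def knife(*args)
  Shellwords.join(['knife', *args])
end

KNIFE_EXAMPLES = [
  knife('environment', 'edit', 'staging'),           # modify environment data
  knife('node', 'edit', 'web01.example.com'),        # modify node data
  knife('cookbook', 'upload', 'nginx'),              # push a cookbook
  knife('cookbook', 'download', 'nginx'),            # fetch a cookbook
  knife('ssh', 'chef_environment:staging', 'uptime') # same command on many nodes
].freeze

puts KNIFE_EXAMPLES
```

The last line is the query-based one: the first argument selects the nodes, the second is the shell command to run on each of them.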

Is it a fun tool? It sure is. But you know the saying, “don’t drink and drive”; in this context, due to the impact of individual commands, one must be extra careful (and most likely sober).

That’s it for a small introduction to Chef concepts. Thank you for reading and have a nice day!